The AI Thinking Gap — Why We Have the Tools but Not the Thinking to Use Them

Organizations are spending billions on AI tools — but without a thinking framework, usage stays stuck at the lowest cognitive levels. This is where real transformation begins.

Jasem Neaimi

AI Collaboration Researcher

Nearly every business leader today expects AI to fundamentally transform jobs in their organization. Survey after survey confirms it — the overwhelming majority see AI reshaping their workforce within the next year.

Microsoft, Nvidia, and Google are committing billions. Organizations are racing to adopt. Budgets are doubling year over year.

The infrastructure is coming. The investment is here. The strategy is clear.

But ask most professionals — in banking, real estate, government, healthcare — one simple question: How should I think with AI?

And you'll get silence.

The Infrastructure Isn't the Gap

AI strategy is accelerating everywhere. Every sector is moving. Every boardroom is asking. This isn't a trend. It's a global shift.

But there's a gap between building AI infrastructure and building AI thinking.

Consider what's happening on the ground:

- Companies are adopting AI tools but struggling to see returns. "Most AI projects in the enterprise space don't deliver what they promise" — a sentiment echoed across LinkedIn by consultancies worldwide.

- Prompt engineering initiatives are training people on input — but not on judgment.

- Job postings for "Director of AI" and "Head of AI Strategy" are multiplying — but the people filling those roles often have no framework for deciding when AI should lead and when humans should lead.

This is the AI thinking gap: leaders expect transformation but lack a structured way to think about it.

Why Tools Aren't Enough

Most AI adoption follows a predictable pattern:

- Buy a tool (ChatGPT, Copilot, a custom model)

- Train people to use it (prompt engineering workshops, vendor demos)

- Deploy it on tasks (drafting emails, summarizing reports, generating code)

- Wonder why ROI is flat (it feels faster but nothing structural changed)

This pattern fails because it treats AI as a tool when it should be treated as a collaboration partner. And collaborations need structure.

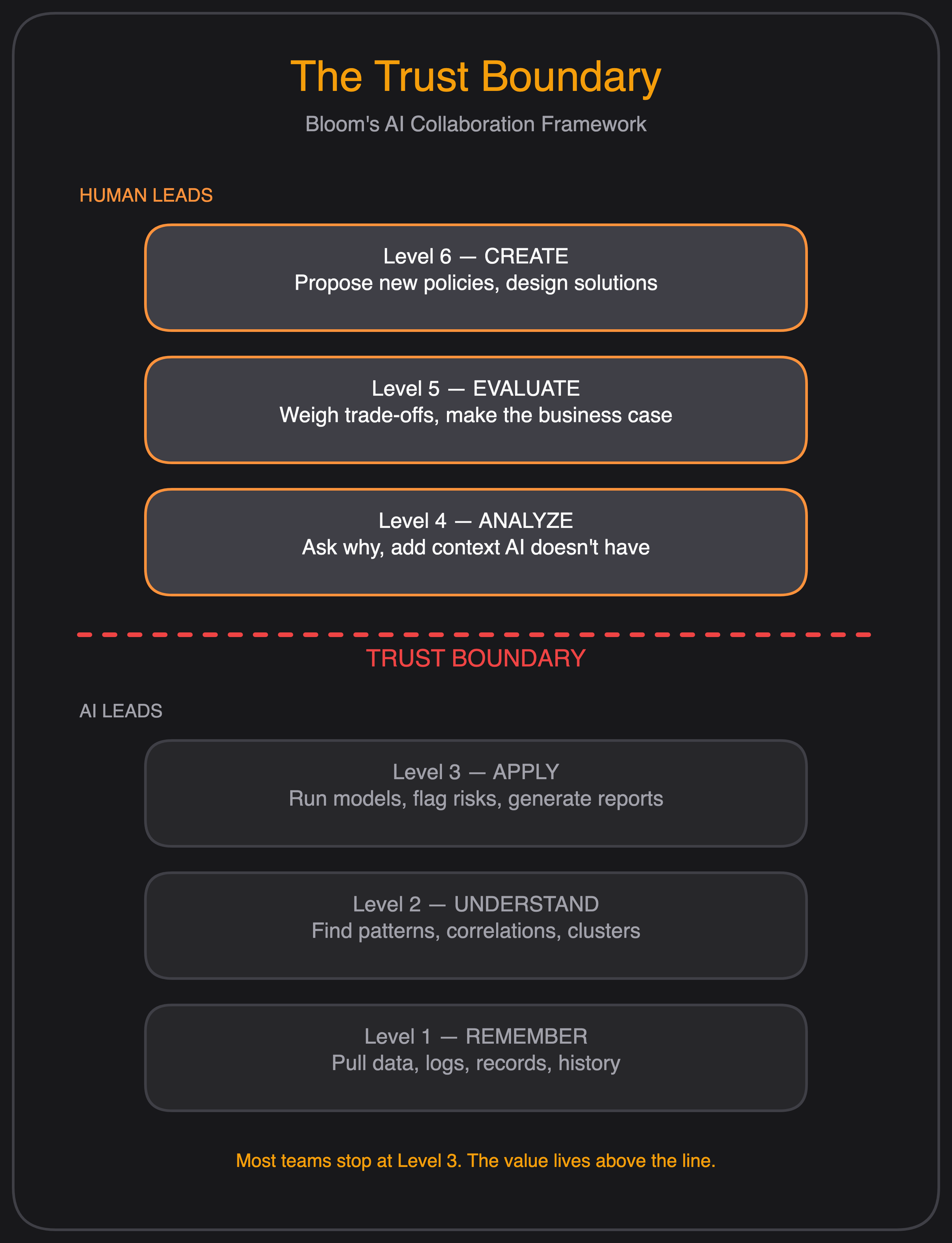

In Bloom's Taxonomy, a framework from educational psychology first published in 1956 and revised in 2001, there are six levels of thinking: Remember, Understand, Apply, Analyze, Evaluate, Create.

AI excels at the first three. It remembers everything. It understands patterns in text. It applies known solutions to familiar problems. This is where most organizations stop — they hand AI a Level 1-3 task and call it transformation.

But the real value lives at Levels 4-6. Analyzing which AI output matters for your context. Evaluating whether the recommendation aligns with your strategy. Creating new solutions that combine AI's speed with human judgment.

Nobody is teaching this split. And without it, most organizations stay stuck at the bottom of the cognitive stack.

What This Looks Like on a Tuesday Morning

Here is what the difference looks like in practice: a scenario in which the framework changes how a team approaches the same problem.

A logistics company's delivery complaints are rising.

A fleet manager at a mid-size delivery company notices that customer complaints about late deliveries have doubled over the last two months. The team is under pressure to fix it.

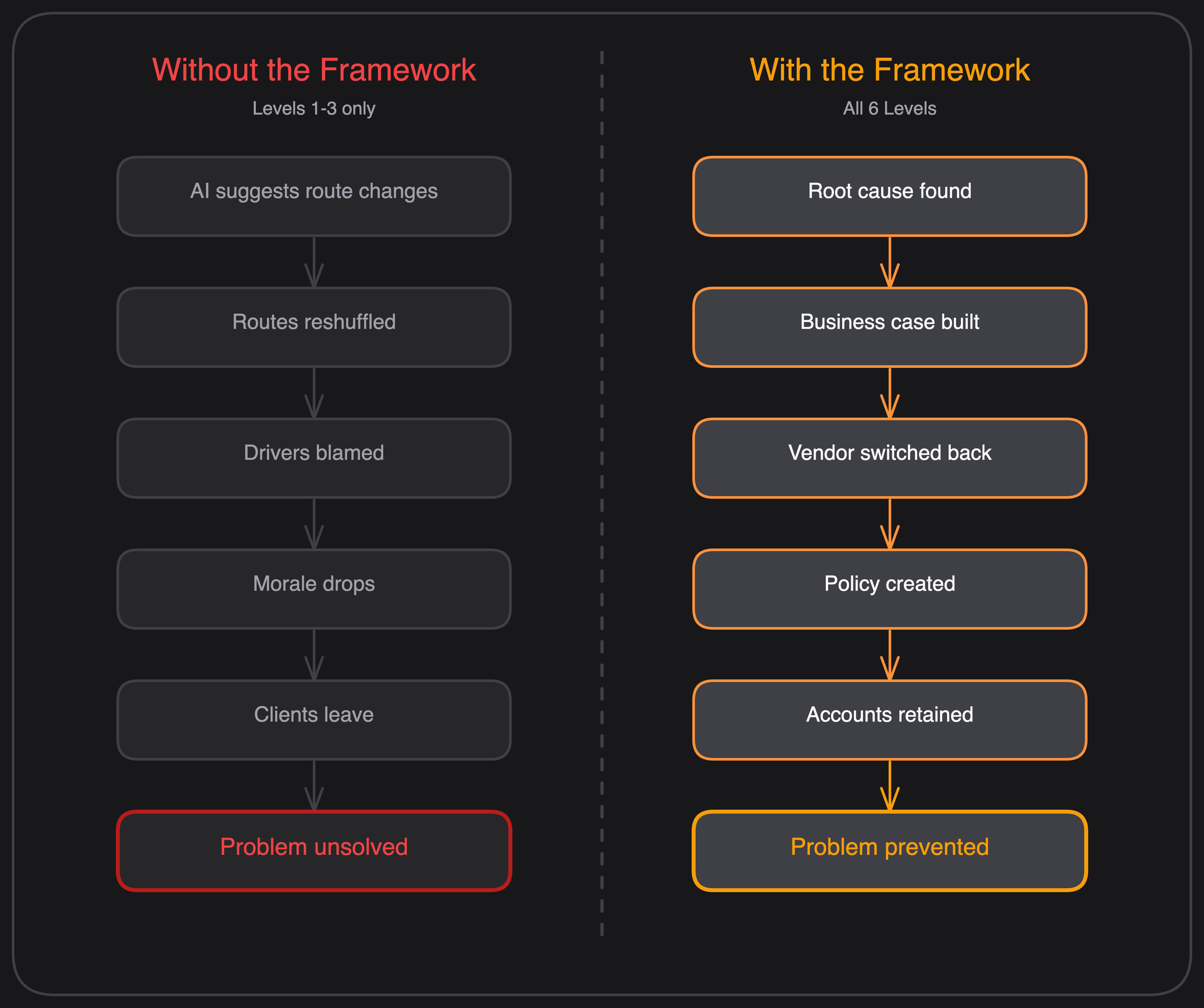

How most teams use AI today (Levels 1-3 only):

- Feed delivery data into an AI route optimizer

- AI suggests shorter routes and reassigns drivers

- Generate a report showing "optimized delivery windows"

Result: routes look better on screen, but complaints keep coming. Management blames the drivers. Morale drops. Nothing changes.

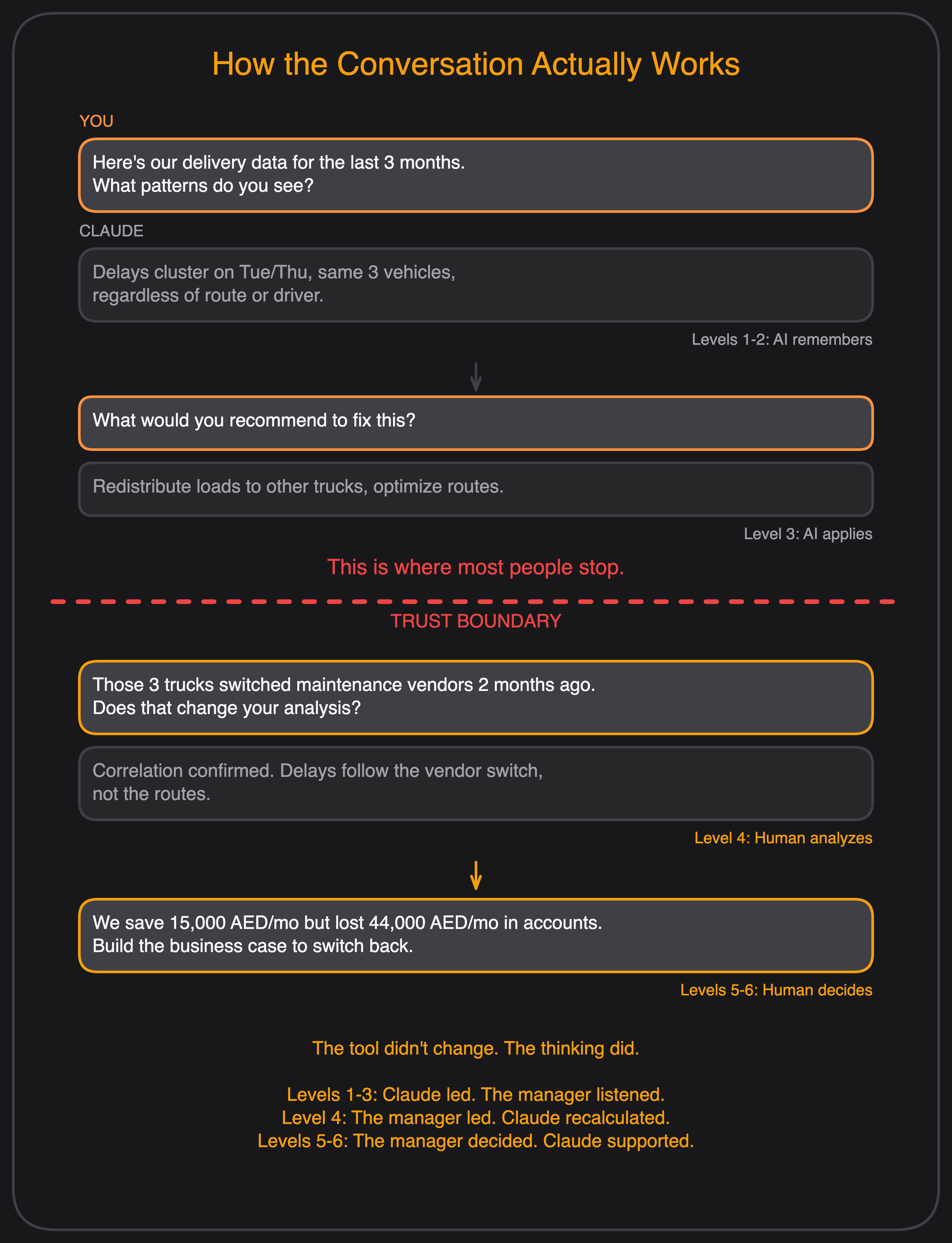

How the same team works using the framework — with Claude:

The fleet manager doesn't jump to a fix. They open Claude and work through the problem.

Level 1-2 — Let AI Remember and Understand

The manager pastes their delivery data, driver logs, and complaint records into Claude and asks:

"Here's our delivery data for the last 3 months. What patterns do you see?"

Claude finds that late deliveries cluster on Tuesdays and Thursdays, mostly from the same 3 vehicles, regardless of which driver or route is assigned.
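A pattern claim like this sits below the auditability boundary: it can be checked directly against the delivery data. A minimal sketch of that check, assuming each delivery record is a dict with hypothetical `weekday`, `vehicle`, `driver`, and `late` fields (the field names and the toy records are illustrative, not the scenario's real data):

```python
from collections import Counter

def late_counts(records, key):
    """Count late deliveries grouped by one field, e.g. 'weekday', 'vehicle', or 'driver'."""
    return Counter(r[key] for r in records if r["late"])

# Hypothetical toy records; real delivery logs would carry many more fields.
deliveries = [
    {"weekday": "Tue", "vehicle": "V07", "driver": "A", "late": True},
    {"weekday": "Thu", "vehicle": "V07", "driver": "B", "late": True},
    {"weekday": "Tue", "vehicle": "V12", "driver": "C", "late": True},
    {"weekday": "Mon", "vehicle": "V03", "driver": "A", "late": False},
    {"weekday": "Thu", "vehicle": "V12", "driver": "A", "late": True},
]

# Lateness should cluster by weekday and vehicle, but spread across drivers.
print(late_counts(deliveries, "weekday").most_common())
print(late_counts(deliveries, "vehicle").most_common())
print(late_counts(deliveries, "driver").most_common())
```

Grouping by driver as well as by vehicle is what lets the manager confirm the "regardless of which driver" part of the finding rather than take it on trust.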

Level 3 — Let AI Apply

The manager follows up:

"What would you recommend to fix this?"

Claude suggests redistributing those vehicles' loads to other trucks and optimizing routes to compensate.

This is where most people stop. The AI gave an answer. It sounds logical. Ship it.

The Auditability Boundary

Level 4 — The Human Analyzes

But the manager doesn't accept the answer. They know something Claude doesn't: those 3 trucks were moved to a cheaper maintenance provider two months ago, right when the complaints started.

They test this with Claude:

"Those 3 trucks switched maintenance vendors 2 months ago. Does that change your analysis?"

Claude re-runs the analysis with that context, and the correlation is clear: the delays follow the vendor switch, not the routes. The most likely explanation is that maintenance quality dropped and the vehicles are underperforming.
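The manager's hypothesis is itself testable: compare the late-delivery rate of the affected trucks before and after the vendor switch. A minimal sketch, assuming hypothetical records with `date`, `vehicle`, and `late` fields and a known switch date (all values below are made up for illustration). A jump in the rate supports the hypothesis but does not prove the cause on its own, which is why the judgment stays with the human at Level 4.

```python
from datetime import date

def late_rate(records):
    """Share of deliveries in `records` that were late (0.0 if there are none)."""
    return sum(r["late"] for r in records) / len(records) if records else 0.0

def before_after(records, vehicles, switch_date):
    """Late rate for the given vehicles before vs. on/after the switch date."""
    subset = [r for r in records if r["vehicle"] in vehicles]
    before = [r for r in subset if r["date"] < switch_date]
    after = [r for r in subset if r["date"] >= switch_date]
    return late_rate(before), late_rate(after)

# Hypothetical records; vehicle IDs and dates are illustrative only.
records = [
    {"date": date(2024, 1, 9), "vehicle": "V07", "late": False},
    {"date": date(2024, 1, 11), "vehicle": "V12", "late": False},
    {"date": date(2024, 3, 5), "vehicle": "V07", "late": True},
    {"date": date(2024, 3, 7), "vehicle": "V12", "late": True},
    {"date": date(2024, 3, 7), "vehicle": "V03", "late": False},
]

affected = {"V07", "V12", "V19"}  # hypothetical IDs for "those 3 trucks"
print(before_after(records, affected, switch_date=date(2024, 2, 1)))
```

Running the same comparison on the unaffected vehicles gives a natural control: if their rate did not move, the vendor switch becomes a much stronger suspect than routes or drivers.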

Level 5 — The Human Evaluates

The manager asks:

"The new vendor saves us 15,000/mo but we've lost two accounts worth 44,000/mo. Build the business case to switch back."

Claude builds a cost comparison, projects the impact over 6 months, and drafts talking points for the manager's meeting with leadership.
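The core of that business case is simple arithmetic on the manager's two figures. A minimal sketch, assuming both figures are flat and recurring each month, that 44,000/mo is the combined value of the two lost accounts, and that account value can be compared directly with the savings (if it is revenue rather than margin, the real gap is smaller). The scenario names no currency, so none is used here.

```python
def net_monthly_impact(vendor_savings, lost_account_value):
    """Net monthly effect of the vendor switch; negative means it costs money."""
    return vendor_savings - lost_account_value

def projected_impact(vendor_savings, lost_account_value, months):
    """Cumulative net effect over a projection window, assuming flat monthly figures."""
    return net_monthly_impact(vendor_savings, lost_account_value) * months

print(net_monthly_impact(15_000, 44_000))   # -29000 per month
print(projected_impact(15_000, 44_000, 6))  # -174000 over 6 months
```

The switch that "saves" 15,000/mo ends up costing 29,000/mo net under these assumptions, before counting any further accounts at risk.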

Level 6 — The Human Creates

The manager now has the insight and the business case. They propose a new policy: any vendor change that affects fleet operations gets a 30-day performance review before full rollout. They ask Claude to draft the policy document and the KPIs to track it.

The Real Cost of Stopping at Level 3

Compare the two outcomes in the logistics scenario:

The Level 1-3 team reshuffled routes, blamed drivers, lost morale, and solved nothing. The framework team found the real cause, a maintenance cost cut that saved 15,000/mo while two lost accounts cost 44,000/mo, and fixed it before losing more clients.

The fleet manager didn't need to be an AI expert. They didn't need a data science degree. They needed to know when to stop trusting the dashboard and start asking why.

The tool didn't change. The thinking did.

The Auditability Boundary in Enterprise

In the Bloom's AI Collaboration Framework v2, the line between Level 3 (Apply) and Level 4 (Analyze) is the auditability boundary. (v1 called it the "trust boundary"; v2 corrects the name — the boundary is about whether an external referent exists, not whether AI is reliable.)

Below the boundary, an external referent exists — a source, a document, a runtime, a spec — so AI output is checkable against that referent. Above it, no referent exists outside the human's context, values, and judgment, so the human must lead.
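The boundary rule can be written down as a small decision function. A minimal sketch, assuming the v2 rule as stated above: AI leads when the task sits at Levels 1-3 and an external referent exists to check its output against. The enum and function names are hypothetical, not part of the framework, and treating a Level 1-3 task that has no referent as human-led is this sketch's own assumption.

```python
from enum import IntEnum

class Level(IntEnum):
    """Bloom's levels, numbered 1 (lowest) to 6 (highest)."""
    REMEMBER = 1
    UNDERSTAND = 2
    APPLY = 3
    ANALYZE = 4
    EVALUATE = 5
    CREATE = 6

def who_leads(level: Level, has_external_referent: bool) -> str:
    """Return 'AI' below the auditability boundary and 'human' above it."""
    # Below the boundary: output is checkable against a source, document, runtime, or spec.
    if level <= Level.APPLY and has_external_referent:
        return "AI"
    # No referent outside the human's context, values, and judgment: the human leads.
    return "human"

print(who_leads(Level.UNDERSTAND, has_external_referent=True))  # AI
print(who_leads(Level.EVALUATE, has_external_referent=False))   # human
```

In the logistics scenario, "find the patterns in this data" maps to the first call; "is switching back worth it?" maps to the second.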

Here's what this looks like in practice across industries:

Banking:

- Below the boundary: AI summarizes regulatory documents, flags transaction anomalies, generates compliance reports. (Levels 1-3 — AI leads)

- Above the boundary: Deciding which regulatory risk matters most to your institution right now. Evaluating whether the anomaly detection model reflects real fraud patterns or historical bias. (Levels 4-5 — Human leads)

Real Estate:

- Below the boundary: AI prices properties based on comparable data, generates listing descriptions, predicts demand. (Levels 1-3)

- Above the boundary: Evaluating whether the pricing model reflects the current market. Deciding which development projects to prioritize when conditions shift. (Levels 4-5)

Government:

- Below the boundary: AI processes citizen requests, translates documents, generates policy summaries. (Levels 1-3)

- Above the boundary: Analyzing whether an AI-powered public service is equitable across demographics. Evaluating the societal impact of an AI-driven decision. (Levels 4-6)

In every case, the organizations that succeed are the ones that know where the boundary is — and staff it with human judgment. v2 also makes the boundary dynamic: it moves up as consequences grow, the domain is novel to AI, or verification is expensive.

The 6+3 Questions for Enterprise AI

Before any AI deployment, the framework asks six evaluative questions — and three more for high-commitment bets (full set in v2):

| # | Question | Enterprise Application |
|---|---|---|
| 1 | What is the purpose? | Why are we deploying AI here — to cut costs, improve quality, or because everyone else is? |
| 2 | What does each side bring? | What does AI do faster — and what do we still need human expertise for? |
| 3 | What does success look like? | How do we measure AI's impact beyond "it feels faster"? |
| 4 | What am I afraid of? | What's the worst outcome if AI gets this wrong? Can we recover? |
| 5 | What's the scope? | Where exactly does AI lead, and where does a human take over? |
| 6 | What's the commitment level? | Are we piloting or betting the business on this? |
| 7 | What are we assuming? (v2) | Which premises about data, users, or compliance haven't been tested yet? |
| 8 | Is this decision reversible? (v2) | If this AI deployment fails, can we roll back without strategic damage? |
| 9 | What would change our mind? (v2) | What signal — a metric, an incident, a regulatory move — would make us stop or pivot? |

Most failed AI projects skip questions 1, 4, and 5. They deploy first and evaluate later — which is bottom-up thinking applied to a top-down problem. The v2 epistemic round (7–9) catches the deeper failure: betting the business on a premise nobody named.
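One way to make "before, not after" enforceable is a pre-deployment gate that lists every required question still lacking an answer. A minimal sketch, assuming answers are collected as free text keyed by question number and that questions 7-9 become required only when a project is flagged high-commitment; the function name, data shape, and sample answers are hypothetical.

```python
CORE_QUESTIONS = {
    1: "What is the purpose?",
    2: "What does each side bring?",
    3: "What does success look like?",
    4: "What am I afraid of?",
    5: "What's the scope?",
    6: "What's the commitment level?",
}
EPISTEMIC_QUESTIONS = {
    7: "What are we assuming?",
    8: "Is this decision reversible?",
    9: "What would change our mind?",
}

def missing_questions(answers: dict[int, str], high_commitment: bool) -> list[str]:
    """List required questions with no non-empty answer, in question order."""
    required = dict(CORE_QUESTIONS)
    if high_commitment:
        required.update(EPISTEMIC_QUESTIONS)  # the epistemic round is mandatory here
    return [q for n, q in sorted(required.items()) if not answers.get(n, "").strip()]

# Hypothetical partial answers for a pilot project.
answers = {1: "Reduce report drafting time", 4: "Wrong figures in a report; recoverable"}
print(missing_questions(answers, high_commitment=False))
```

A deployment would proceed only when the returned list is empty, so skipping questions 1, 4, or 5 blocks the project instead of surfacing later.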

Where to Start

If you're a leader facing the AI thinking gap, here's where the framework suggests you begin:

- Map your team's AI tasks to Bloom's levels. If everything falls in Levels 1-3, you're using AI as a tool, not a collaborator.

- Identify where human judgment is missing. Every AI deployment needs a human at Level 4+ making the decisions AI can't.

- Ask the 6+3 questions before your next AI project. Not after. Before. For high-commitment bets, the epistemic round is mandatory — it surfaces the assumptions that would otherwise become tomorrow's incident report.
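The first step, mapping tasks to levels, can be as simple as tagging each task by hand and checking whether anything lands at Level 4 or above. A minimal sketch, assuming a plain dict from task name to level; the task names and their levels are hypothetical examples echoing the tasks mentioned earlier in this article.

```python
from collections import Counter

def level_profile(tasks: dict[str, int]) -> Counter:
    """Count how many tasks sit at each Bloom's level (1-6)."""
    return Counter(tasks.values())

def is_tool_only(tasks: dict[str, int]) -> bool:
    """True if every task is at Levels 1-3, i.e. AI is used as a tool, not a collaborator."""
    return all(level <= 3 for level in tasks.values())

# Hypothetical hand-tagged tasks for one team.
team_tasks = {
    "Draft customer emails": 3,
    "Summarize weekly reports": 2,
    "Generate route plans": 3,
}
print(level_profile(team_tasks))
print(is_tool_only(team_tasks))
```

If `is_tool_only` comes back true, the second step applies: find where a human at Level 4+ should be making the call.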

The investment is here. The thinking has to catch up.
