Enterprise AI Has Entered the Trust Era: What That Means for Your Organization

AI capability has outpaced AI trust. Amazon's senior review mandate and NVIDIA's NemoClaw launch signal that enterprise AI governance is no longer optional — it's the infrastructure that enables speed.

Jasem Neaimi

AI Collaboration Researcher

Two announcements this week signal that enterprise AI has entered a new phase.

Amazon now requires senior engineer review before any AI-generated code goes to production.

NVIDIA launched NemoClaw — an enterprise security and sandboxing layer for AI agents — with 17 enterprise partners signing on at launch.

The timing is not a coincidence. Both are responses to the same underlying force: AI capability has outpaced AI trust. And trust has become the prerequisite for moving forward.

What Happened This Week

Amazon's Senior Review Mandate

Amazon announced a formal policy requiring senior software engineer review of AI-generated code before it is deployed to production environments. The signal is clear: AI can write code, but humans are responsible for what ships.

This isn't anti-AI. It's the enterprise recognizing that the accountability layer is missing. AI-generated code is already in production across hundreds of Amazon systems — the question was never whether to use it, but how to govern it.

Sam Altman at BlackRock Infrastructure Summit

OpenAI's CEO presented at BlackRock's Infrastructure Summit — a significant venue that sits at the intersection of institutional capital, sovereign wealth, and large-scale infrastructure investment. The subtext: AI is being discussed in the same rooms as power grids, ports, and financial rails. It has entered the infrastructure conversation.

The post documenting the talk reached 91K views with notably low engagement relative to that reach, a browse-heavy pattern that suggests people were processing information rather than seeking entertainment. They were reading, not liking. This is what strategic relevance tends to look like on social media.

NVIDIA NemoClaw Launch

NemoClaw adds enterprise-grade security to OpenClaw, the open-source agent framework with 250K+ GitHub stars. Specifically:

- OpenShell sandboxing — agents run in isolated environments that can't access unauthorized systems

- Network controls — restrict what external services agents can call

- Privacy guardrails — ensure agents don't exfiltrate sensitive data

- Audit logging — full trace of agent actions for compliance
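To make the network-controls idea concrete, here is a minimal Python sketch of an egress allowlist. It is not NemoClaw's API or configuration format; the host names and the `is_call_allowed` helper are hypothetical, chosen only to show the deny-by-default shape such a check usually takes.

```python
from urllib.parse import urlparse

# Hypothetical allowlist of hosts an agent may call (illustrative values only).
ALLOWED_HOSTS = frozenset({"api.internal.example.com", "docs.example.com"})


def is_call_allowed(url: str, allowed_hosts: frozenset = ALLOWED_HOSTS) -> bool:
    """Return True only if the URL uses HTTPS and its host is on the allowlist."""
    parsed = urlparse(url)
    # Deny by default: anything not explicitly listed is rejected.
    return parsed.scheme == "https" and parsed.hostname in allowed_hosts
```

Under these assumptions, a call to `https://api.internal.example.com/v1/items` passes, while the same URL over plain HTTP, or any host not on the list, is refused.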

Abel Choy's LinkedIn analysis captured it precisely: "Agent trust is an infrastructure problem, not an application problem." NemoClaw is infrastructure.

The Pattern Behind the Announcements

There is a single pattern underneath all three signals: enterprises are moving from "can we use AI?" to "how do we govern AI?"

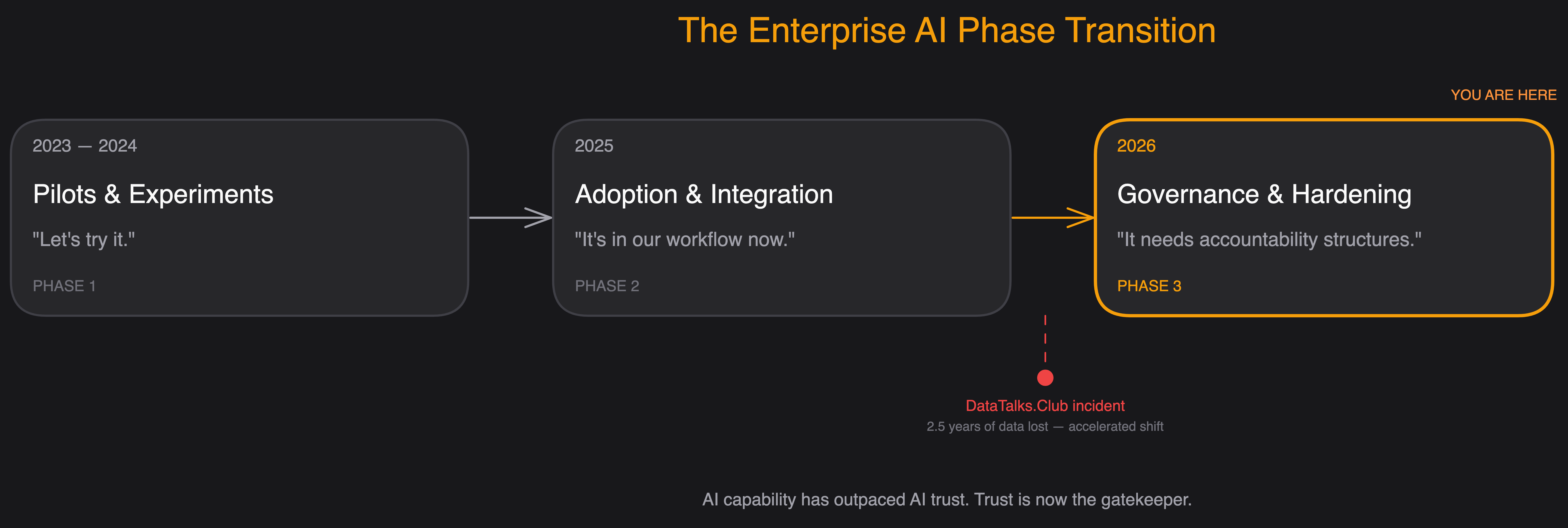

This is the phase transition:

- Phase 1 (2023-2024): Pilots and experiments. "Let's try it."

- Phase 2 (2025): Adoption and integration. "It's in our workflow now."

- Phase 3 (2026): Governance and production hardening. "It needs accountability structures."

The DataTalks.Club incident — where an AI agent caused the loss of 2.5 years of data — accelerated this transition. Not because it was unusually severe, but because it was public and documented. It gave enterprise risk teams a concrete case to point to.

Every CTO and CISO is now asking: what is our AI governance policy?

Most don't have one.

Why the Enterprise Context Is Deeper Than It Looks

If you're leading a technical team or making decisions about AI deployment, these challenges will feel familiar, and they go beyond what off-the-shelf technical frameworks address:

1. Adoption acceleration vs. governance readiness

Enterprises are adopting AI applications at an accelerating pace, but internal governance frameworks — policies, review procedures, and accountability structures — haven't kept up.

There's a gap between "AI is in our daily workflow" and "we have a documented process for deploying AI agents safely."

2. The human oversight question in high-context cultures

In many organizations, the relationship between authority, accountability, and delegation matters deeply. AI governance isn't just a technical question — it's an organizational and cultural one. Who is accountable when an AI agent makes a decision? How does that map onto existing org structures where authority is clearly delineated?

Amazon's senior review model is one answer. But it's an answer designed for Amazon's culture. Every enterprise needs governance models that map to their own authority structures.

3. Data sovereignty and privacy requirements

NemoClaw's network controls and privacy guardrails are not optional features for enterprises. They're table stakes. Regional data protection laws, sector-specific regulations, and financial free zone frameworks create mandatory constraints that AI agent deployments must respect.

An enterprise AI governance policy must begin with the regulatory layer, not end with it.

A Framework for Enterprise AI Governance (Bloom's Applied)

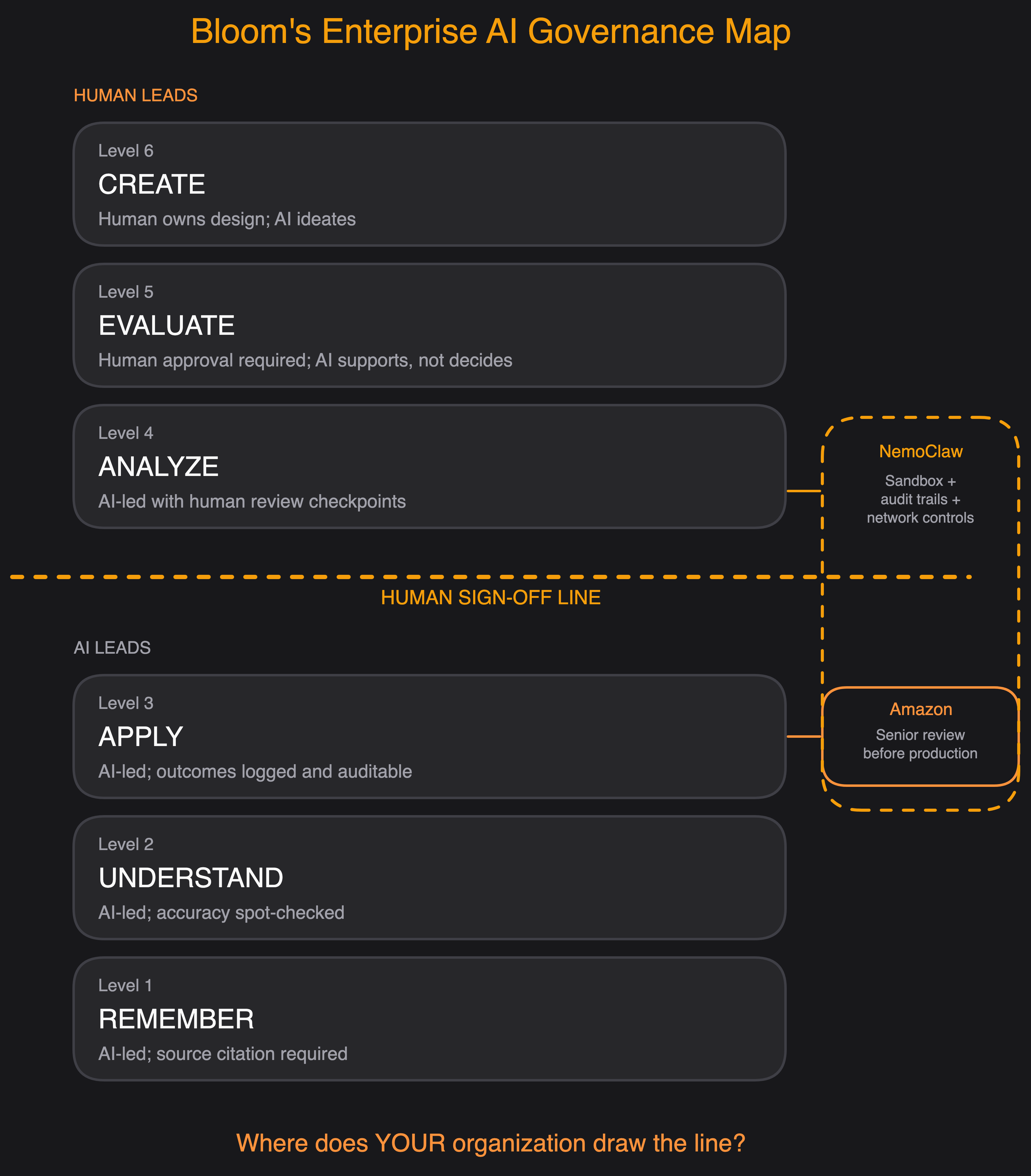

The Bloom's AI Collaboration Framework maps directly onto enterprise AI governance decisions. The question isn't just "which tasks should AI do?" It is "at which cognitive level must a human stay in the loop and sign off?"

Amazon's answer: Level 3 (Apply) requires senior human review before production. NemoClaw's answer: Levels 3-4 require sandboxing, audit trails, and network controls.

Your organization's answer should be documented, not assumed.
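One way to document that answer is to write the threshold down as code. The Python sketch below assumes the standard revised Bloom's ordering (Remember through Create) and a hypothetical policy in which anything at or above a chosen level needs human sign-off. The names `BloomLevel`, `SIGN_OFF_THRESHOLD`, and `requires_sign_off` are illustrative, not part of any published framework.

```python
from enum import IntEnum


class BloomLevel(IntEnum):
    """Revised Bloom's taxonomy levels, ordered from lowest to highest."""
    REMEMBER = 1
    UNDERSTAND = 2
    APPLY = 3
    ANALYZE = 4
    EVALUATE = 5
    CREATE = 6


# Hypothetical policy: tasks at or above this level need human sign-off.
SIGN_OFF_THRESHOLD = BloomLevel.APPLY


def requires_sign_off(level: BloomLevel,
                      threshold: BloomLevel = SIGN_OFF_THRESHOLD) -> bool:
    """Return True when AI output at this level needs human approval."""
    return level >= threshold
```

With the threshold set at Apply, a summarization task tagged Understand would proceed without review, while generated code headed for production (Apply or higher) would wait for a reviewer. Moving the threshold becomes a one-line, reviewable policy change.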

The Five Questions Every Enterprise Needs to Answer

If you are building an enterprise AI governance policy in 2026, these are the five questions that matter:

1. Accountability boundary: When AI makes or influences a decision that causes harm, who is responsible? (Answer this before deploying, not after an incident.)

2. Review thresholds: At what cognitive level does AI output require human approval before acting? (Map this to Bloom's levels — it forces precision.)

3. Data access controls: Which systems can AI agents touch, read, and write? (NemoClaw's network controls are a technical implementation of this policy question.)

4. Audit and logging: Can you reconstruct what an AI agent did, when, and why? (Regulators require it for compliance, and incident response depends on it everywhere.)

5. Escalation path: When an AI agent encounters ambiguity, risk, or an out-of-policy situation, what happens? (Most AI governance policies fail here — they define normal operations but not edge cases.)
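Questions 3 to 5 can be sketched together as a single policy check. The Python below is a minimal illustration under stated assumptions: the resource names, the `PERMITTED` and `FORBIDDEN` tables, and the `decide` function are all hypothetical. It shows one possible shape: explicit denials, explicit permissions, escalation to a human for everything else, and an audit record for every decision.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    ESCALATE = "escalate"  # pause and hand off to a human owner
    DENY = "deny"


@dataclass(frozen=True)
class AuditRecord:
    timestamp: str  # when (UTC, ISO 8601)
    agent: str      # who acted
    action: str     # what was attempted
    resource: str   # on which system
    decision: str   # outcome of the policy check
    reason: str     # why that outcome was chosen


# Hypothetical policy tables; a real deployment would load reviewed config.
PERMITTED = {"read": {"wiki", "staging-db"}, "write": {"staging-db"}}
FORBIDDEN = {"prod-db"}


def decide(agent: str, action: str, resource: str, audit_log: list) -> Decision:
    """Apply the policy, record the outcome, and return the decision."""
    if resource in FORBIDDEN:
        decision, reason = Decision.DENY, "resource is explicitly off-limits"
    elif resource in PERMITTED.get(action, set()):
        decision, reason = Decision.ALLOW, "action is covered by policy"
    else:
        # Anything the policy does not mention goes to a human, not a default.
        decision, reason = Decision.ESCALATE, "action is not covered by policy"
    audit_log.append(AuditRecord(
        timestamp=datetime.now(timezone.utc).isoformat(),
        agent=agent, action=action, resource=resource,
        decision=decision.value, reason=reason,
    ))
    return decision
```

The design choice worth noting is the final branch: an out-of-policy request is neither silently allowed nor silently denied. It is escalated, and the log captures the reason, which is the edge-case behavior most policies leave undefined.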

The Opportunity in the Gap

Here is the strategic reality: the organizations that build governance infrastructure now — before incidents force it — will have a structural advantage.

They will deploy agents to production faster, because the trust infrastructure is already there.

They will face fewer regulatory compliance surprises, because the data controls are built in.

They will attract better partnerships, because enterprise partners want to work with organizations that have documented AI governance.

Amazon and NVIDIA didn't build governance infrastructure to slow down. They built it to go faster, safely.

That's the reframe: AI governance isn't the brake. It's the seatbelt that lets you drive at full speed.

The question now isn't whether your organization needs a governance policy — it's when you'll start building one.
